Cut your AI data infrastructure costs by up to 30% and boost analytics performance by 2.4x. Learn how AWS Redshift RG instances with Graviton and integrated data lake query engines can accelerate your AI initiatives and deliver rapid ROI.

Your enterprise is investing heavily in AI, but are your underlying data systems ready to support that ambition efficiently? Many businesses find their AI initiatives throttled by slow, expensive, and outdated data infrastructure. This isn't just a technical hurdle; it's a significant drain on your budget, delaying insights and hindering your competitive edge. The hidden cost of inefficient data infrastructure for AI can silently erode your ROI, turning promising projects into costly bottlenecks.

The Staggering Cost of Stagnant Data Infrastructure

Imagine your data warehouse struggling under the weight of growing datasets and complex AI model training queries. Each minute of slow processing, every extra dollar spent on compute resources, translates into tangible business losses. For an enterprise processing terabytes of data daily, an inefficient setup could mean:

- $10,000 - $50,000+ per month in unnecessary cloud compute costs: Older instance types or suboptimal configurations are less efficient, consuming more resources for the same workload.

- Weeks of delayed insights and model deployment: Slow queries mean data scientists wait longer, and critical business decisions are postponed, costing market opportunities.

- Increased operational overhead: Teams spend more time managing performance issues and optimizing existing infrastructure instead of innovating.

- Diminished competitive advantage: Competitors with optimized data pipelines can react faster to market changes, leaving you behind.

The cost of *not* acting is substantial. It's not just about what you spend, but what you *fail to gain* in terms of speed, agility, and innovation. An unoptimized data infrastructure can make your advanced AI models feel like they're running in slow motion, directly impacting your bottom line.

Transform Your AI Data Platform: AWS Redshift RG & Graviton Power

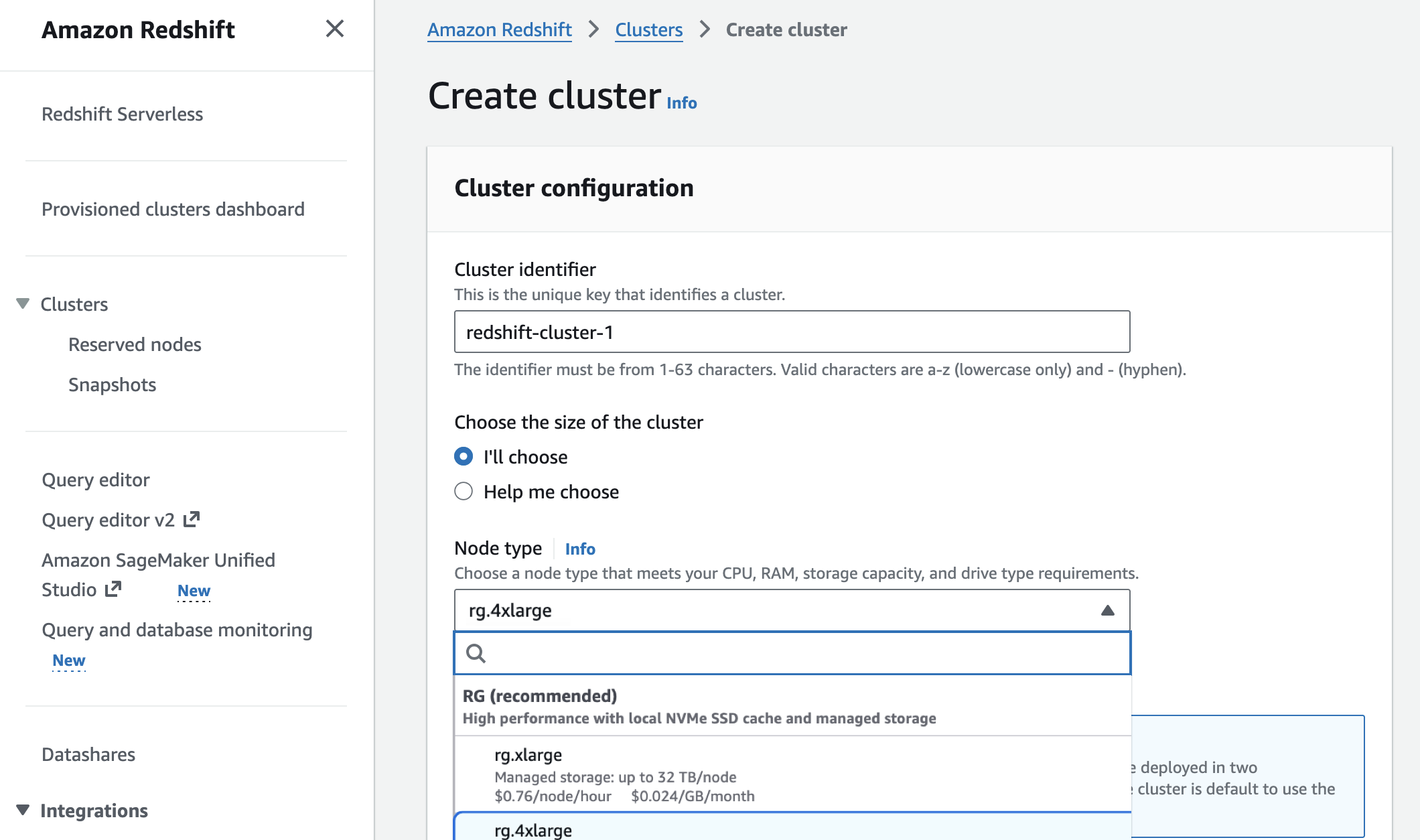

The solution lies in embracing modern, purpose-built data infrastructure that scales efficiently and integrates seamlessly with your AI workflows. Amazon Redshift has just announced a game-changer: new RG instances powered by AWS Graviton processors, featuring an integrated data lake query engine. This isn't just an incremental update; it's a fundamental shift that promises dramatic improvements for your data warehousing and AI initiatives.

Unleashing Graviton for AI Workloads

AWS Graviton processors are custom-designed by Amazon to deliver the best price-performance for cloud workloads. By migrating to Graviton-based Redshift RG instances, businesses can now achieve:

- Up to 2.4x faster data warehouse and data lake workloads: Compared to previous-generation RA3 instances, your queries, ETL processes, and analytical tasks run significantly quicker. This directly accelerates your AI model training, feature engineering, and real-time inference pipelines.

- Up to 30% lower price per vCPU: This is a direct, quantifiable cost reduction. Imagine cutting your monthly cloud data warehousing bill by nearly a third, without sacrificing performance – in fact, *improving* it.

These aren't hypothetical gains. These are concrete improvements that translate directly into reduced operational costs and accelerated time-to-insight for your most demanding AI applications.

Seamless Data Lake Integration with Apache Iceberg

Modern AI often relies on massive datasets stored in data lakes (e.g., Amazon S3). Traditionally, integrating a data warehouse with a data lake required complex ETL processes, data duplication, and synchronization challenges. Redshift RG instances address this with an integrated data lake query engine that supports open table formats like Apache Iceberg.

This means your Redshift cluster can now:

- Query data directly in your S3 data lake without moving it.

- Benefit from the schema evolution, ACID transactions, and partition evolution capabilities of Iceberg tables, making your data lake more reliable and manageable.

- Consolidate your analytics strategy: Use Redshift for high-performance SQL analytics on structured data and directly query your raw, semi-structured data in the data lake, all from a single platform.

This streamlined integration is critical for AI. It allows data scientists to access a broader range of data faster and with greater consistency, enabling more robust model training and feature development without the overhead of complex data pipelines.

Practical Implementation: Code Examples

Implementing Redshift RG instances and integrating with your data lake requires careful planning and execution. Here’s a glimpse into what a setup might look like for external tables with Apache Iceberg.

1. Creating an External Schema for Your Data Lake

First, you define an external schema in Redshift that points to your AWS Glue Data Catalog, which contains metadata for your Iceberg tables in S3.

CREATE EXTERNAL SCHEMA IF NOT EXISTS data_lake_schema

FROM DATA CATALOG

DATABASE 'your_glue_database_name'

IAM_ROLE 'arn:aws:iam::123456789012:role/RedshiftS3AccessRole'

CREATE EXTERNAL DATABASE IF NOT EXISTS;

The RedshiftS3AccessRole IAM role must have permissions to access your S3 buckets and Glue Data Catalog.

2. Querying an Apache Iceberg Table

Once the external schema is set up, you can query your Iceberg tables directly from Redshift using standard SQL. This example shows querying a hypothetical customer_interactions table stored in Iceberg format in your data lake:

SELECT

ci.customer_id,

COUNT(ci.event_id) AS total_interactions,

AVG(ci.duration_seconds) AS avg_interaction_duration

FROM data_lake_schema.customer_interactions ci

WHERE ci.event_date >= '2026-01-01'

GROUP BY 1

ORDER BY total_interactions DESC

LIMIT 100;

This query leverages Redshift's powerful query engine to analyze vast amounts of data stored in your data lake, without the need for cumbersome ETL processes into Redshift itself. For AI teams, this means rapid experimentation with large datasets for model training and feature engineering.

Why You Need Expert Implementation

While the benefits are clear, migrating to new instance types, optimizing Redshift configurations, and properly integrating with a data lake (especially with complex formats like Iceberg) is not a DIY task for most enterprises. It requires deep expertise in AWS Redshift, Graviton optimization, data lake architecture, IAM best practices, and performance tuning. An improperly executed migration can lead to downtime, data inconsistencies, or fail to deliver the promised cost and performance benefits.

This is where an expert AI agency becomes invaluable. We Do IT With AI specializes in:

- Assessment & Planning: Analyzing your current infrastructure, identifying bottlenecks, and crafting a tailored migration strategy.

- Seamless Migration: Executing the transition to Redshift RG instances with minimal disruption to your operations.

- Performance Optimization: Tuning your Redshift cluster and queries for maximum efficiency and cost savings with Graviton.

- Data Lake Integration: Setting up and optimizing your data lake for seamless interaction with Redshift, leveraging formats like Apache Iceberg for AI workloads.

- AI-Ready Data Pipelines: Ensuring your data infrastructure is perfectly aligned to support your machine learning models and analytical applications.

Mini Case Study: Accelerating Insights for an E-commerce Innovator

A rapidly growing e-commerce company faced escalating AWS Redshift costs and slow report generation, hindering their ability to analyze customer behavior and optimize marketing campaigns with AI. Their data scientists spent days waiting for large queries to complete, impacting the agility of their recommendation engine development. We Do IT With AI stepped in, assessing their Redshift workload and data lake strategy. We executed a migration to new Redshift RG instances powered by Graviton and optimized their external tables to leverage Apache Iceberg for historical data in S3. The results were dramatic: a 32% reduction in Redshift operational costs within three months, and a 2.8x acceleration in critical data science queries. This enabled them to deploy new AI-driven marketing campaigns weeks faster, directly boosting customer engagement and revenue.

FAQ

How long does implementation take?

The timeline for migrating to Redshift RG instances and optimizing data lake integration typically ranges from 4 to 8 weeks, depending on the complexity of your existing data infrastructure, the volume of data, and specific integration requirements. Our process includes initial assessment, planning, phased migration, and rigorous testing to ensure a smooth transition with minimal downtime.

What ROI can we expect?

Clients can expect significant ROI from reduced operational costs and accelerated data processing. Direct cost savings from Graviton-based instances can be up to 30% on compute, while performance gains of up to 2.4x can drastically reduce the time-to-insight for AI and business intelligence. For many enterprises, these savings and efficiency gains lead to the project paying for itself within 3 to 6 months through direct cost reduction and enhanced business agility.

Do we need a technical team to maintain it?

While Redshift is a managed service, optimizing its performance and ensuring efficient data lake integration requires ongoing expertise. After initial implementation, our team can provide training for your internal staff or offer managed services to ensure your data infrastructure remains performant, cost-optimized, and aligned with your evolving AI strategy. This includes monitoring, performance tuning, and scaling as your business grows.

Ready to implement this for your business? Book a free assessment at WeDoItWithAI

Original source

aws.amazon.comGet the best tech guides

Tutorials, new tools, and AI trends straight to your inbox. No spam, only valuable content.

You can unsubscribe at any time.